You may wonder, at first, why you would even need a Screenshots API to take a screenshot. Is it really necessary when you can just press a key on your keyboard?

In this article, we will discuss why you may want to use an API, its advantages, possible use cases, and, lastly, how to use the Screenshots API to capture any web page. Yes, we will walk you through it step by step. Let’s roll!

Why use an API?

So, why an API? The short answer: automation. The longer answer is that there is a lot you can do with an API, and automating repeatable tasks is one of the strongest reasons for the growing use of screenshot APIs. Because it is an API, it can scale and is reliable for almost any application you have in mind. You can also save an image of an entire web page without having to open the website in a browser.

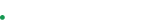

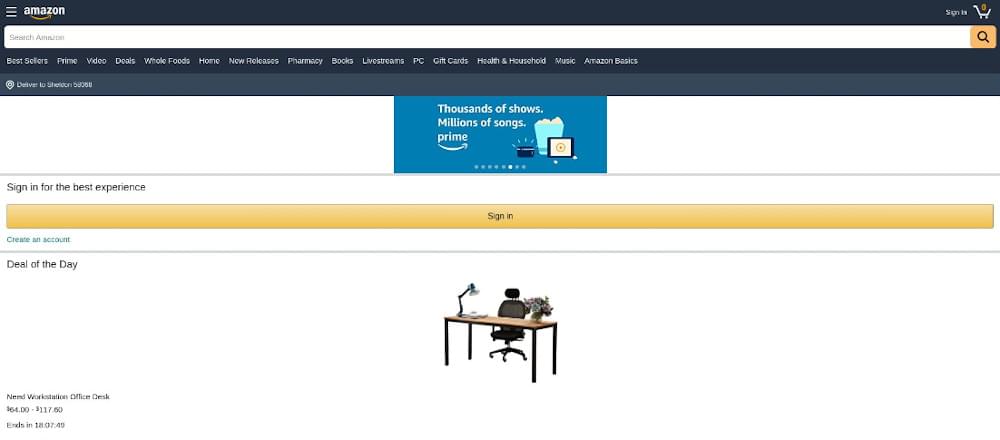

Take the image below as an example. With just one API call, you can download an exact copy of a webpage in seconds and save it to your local machine in JPEG or PNG format.

Most web pages nowadays do not fit on a single browser screen and take a few scrolls to reach the bottom. Saving an image of a web page manually can therefore take several minutes or longer: you open your browser, go to the URL, wait for the page to load, take screenshots of multiple sections, and then stitch them back together into one image in an editing application. That may be viable if you only need a small section of the page, but if you plan to capture many pages, it is a waste of time when a short script can call an API, automate the entire process, and produce better results.

To show how useful a screenshot API can be, here are some of its most popular applications across a wide range of users.

Screenshot API use cases

Take web scraping to the next level - A screenshot API can greatly enhance your web scraping projects. There are various ways to take advantage of its capabilities, and it can be easily integrated into existing systems. Use it to validate whether your scraper is getting the correct source code, capture thousands of screenshots in minutes as an additional data point alongside the text you scrape, or keep track of website changes through snapshots so you can quickly adjust your scraper when necessary.
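To make the change-tracking idea concrete, here is a minimal Node.js sketch. It assumes you already save a screenshot of the same page on each run. Each image is hashed with SHA-256, so you only need to keep the digest from the previous run. The function names are hypothetical and are not part of any Crawlbase library.

```javascript
// Change-detection sketch: compare a new screenshot against the digest
// stored from the previous run. Helper names are hypothetical.
const { createHash } = require("node:crypto");
const { readFile } = require("node:fs/promises");

// SHA-256 digest of the raw image bytes, as a hex string.
function sha256(bytes) {
  return createHash("sha256").update(bytes).digest("hex");
}

// True when the new screenshot's bytes differ from the stored digest.
function hasChanged(previousDigest, currentBytes) {
  return previousDigest !== sha256(currentBytes);
}

// Read a freshly saved screenshot, report whether it changed, and return
// the new digest so the caller can store it for the next run.
async function checkSnapshot(file, previousDigest) {
  const bytes = await readFile(file);
  return { changed: hasChanged(previousDigest, bytes), digest: sha256(bytes) };
}
```

A byte-level comparison is deliberately strict. Rotating ads, timestamps, or carousels will also count as changes, so treat a mismatch as a signal to take a closer look rather than proof that your scraper needs updating.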

For study and research - Wouldn’t it be best if you could capture and download the study and research materials you find on various sites in a matter of seconds, so you can focus on what really matters? As we pointed out earlier, saving snapshots of web pages manually takes so much time and effort that it is hard to justify. Automating this task with an API makes much more sense and can significantly reduce your workload. Saving copies of online research papers, books, and useful articles becomes a breeze. Because the images can be stored on a local hard drive or in the cloud, they stay accessible whenever you need them.

Perfect for bloggers, content creators, and web developers - It is a simple API, but it can be a very effective one in the hands of professionals. If you are writing a review or compiling a list of websites for any reason, the API can capture a clean image of any web page, and including that image in your article can improve user engagement. For web developers and freelancers who want to showcase a portfolio of multiple websites, a screenshot API is almost a must, as it can capture high-resolution screenshots of your work with minimal effort.

Using Crawlbase’s Screenshots API

There are many services that offer an automated screenshot API, and finding the right one may be troublesome. You do not need to look any further, though. Crawlbase currently offers one of the best screenshot API services, with built-in anti-bot handling that can bypass blocked requests and CAPTCHAs.

By using this Screenshot API, you can stay anonymous as the API is built on top of thousands of residential and data center proxies managed by artificial intelligence, so you can always get a flawless and high-resolution image of any website you want.

Using the API is easy, as every request starts with the following base URL:

```
https://api.crawlbase.com/screenshots
```

When you create an account, Crawlbase provides a private token. The token is required to use the service and is passed as the `token` parameter:

```
?token=PRIVATE_TOKEN
```

A simple curl command in a terminal or command prompt lets you capture any web page and save the image in a compatible file format of your choice. Note that the target URL is passed URL-encoded in the `url` parameter:

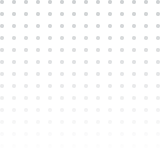

```shell
curl "https://api.crawlbase.com/screenshots?token=PRIVATE_TOKEN&url=https%3A%2F%2Fwww.amazon.com%2Famazon-books%2Fb%3Fie%3DUTF8%26node%3D13270229011" > test.jpeg
```

The result will be a clean image of the entire web page in the best resolution possible, without the unnecessary parts of a web browser such as the scroll bar and the address bar.
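You can make the same request from code. Below is a minimal Node.js sketch of the curl command. It assumes Node 18 or later for the built-in `fetch`, and it reads the token from a `CRAWLBASE_TOKEN` environment variable, which is our own naming choice. It uses `encodeURIComponent` to produce the same percent-encoded `url` value shown in the curl command, then writes the response bytes to `test.jpeg`.

```javascript
// Node.js (18+) sketch of the curl request above.
// Assumption: the token lives in a CRAWLBASE_TOKEN environment variable.
const { writeFile } = require("node:fs/promises");

const BASE_URL = "https://api.crawlbase.com/screenshots";

// Build the request URL; encodeURIComponent yields the same
// percent-encoding as the hand-encoded URL in the curl example.
function buildScreenshotUrl(token, targetUrl) {
  return `${BASE_URL}?token=${encodeURIComponent(token)}&url=${encodeURIComponent(targetUrl)}`;
}

// Request the screenshot and save the raw image bytes to disk,
// the equivalent of `curl "..." > test.jpeg`.
async function saveScreenshot(token, targetUrl, outFile) {
  const res = await fetch(buildScreenshotUrl(token, targetUrl));
  if (!res.ok) {
    throw new Error(`Screenshot request failed with HTTP ${res.status}`);
  }
  await writeFile(outFile, Buffer.from(await res.arrayBuffer()));
  return outFile;
}

// Only call the API when a token is actually configured.
if (process.env.CRAWLBASE_TOKEN) {
  saveScreenshot(
    process.env.CRAWLBASE_TOKEN,
    "https://www.amazon.com/amazon-books/b?ie=UTF8&node=13270229011",
    "test.jpeg"
  )
    .then((file) => console.log(`Saved ${file}`))
    .catch((err) => console.error(err.message));
}
```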

Now, if you want to scale and fully automate the process, you can build your code in any of your favorite programming languages. Crawlbase offers free libraries that integrate smoothly into existing systems, and building a new project around the API is just as straightforward.
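As a rough illustration of scaling up, here is a minimal Node.js sketch that captures a list of pages while keeping only a few requests in flight at once. It makes these assumptions:

- Node 18 or later, for `fetch`.
- A `CRAWLBASE_TOKEN` environment variable.
- An arbitrary limit of three concurrent requests.

The helper names and the `screenshot-N.jpeg` file naming are hypothetical.

```javascript
// Batch-capture sketch (assumptions: Node 18+, CRAWLBASE_TOKEN env var,
// hypothetical helper names and file naming).
const { writeFile } = require("node:fs/promises");

// Run `worker` over `items` with at most `limit` calls in flight,
// returning results in input order.
async function mapWithConcurrency(items, limit, worker) {
  const results = new Array(items.length);
  let next = 0;
  // Each runner keeps claiming the next unprocessed index until none remain.
  async function runner() {
    while (next < items.length) {
      const index = next++;
      results[index] = await worker(items[index], index);
    }
  }
  const count = Math.max(1, Math.min(limit, items.length));
  await Promise.all(Array.from({ length: count }, () => runner()));
  return results;
}

// Capture each URL and save it as screenshot-1.jpeg, screenshot-2.jpeg, ...
async function captureAll(token, urls, limit = 3) {
  return mapWithConcurrency(urls, limit, async (url, index) => {
    const requestUrl =
      "https://api.crawlbase.com/screenshots" +
      `?token=${encodeURIComponent(token)}&url=${encodeURIComponent(url)}`;
    const res = await fetch(requestUrl);
    if (!res.ok) {
      // Record a non-2xx response instead of aborting the whole batch.
      return { url, error: `HTTP ${res.status}` };
    }
    const file = `screenshot-${index + 1}.jpeg`;
    await writeFile(file, Buffer.from(await res.arrayBuffer()));
    return { url, file };
  });
}

// Only hit the API when a token is configured.
if (process.env.CRAWLBASE_TOKEN) {
  captureAll(process.env.CRAWLBASE_TOKEN, ["https://example.com", "https://example.org"])
    .then((results) => console.log(results))
    .catch((err) => console.error(err.message));
}
```

Capping concurrency is a simple way to avoid firing hundreds of simultaneous requests from one script. Tune the limit to your own needs.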

Below is a simple demonstration of how you can use the Screenshots API with the Crawlbase Node library:

```javascript
const { ScreenshotsAPI } = require('crawlbase');
```

In addition to the default behavior, the API accepts optional parameters that you can use as needed:

- `device` - pass this parameter if you want to capture the page as it renders on a specific device. The available options are `desktop` and `mobile`.
- `user_agent` - use this if you need a more specific option than the `device` parameter. It is a character string that lets you pass a custom user agent to the API.
- `css_click_selector` - instructs the API to click an element on the page before the browser captures the resulting page. The value should be a valid CSS selector, for example `.some-other-button` or `#some-button`, and it should be fully encoded.
- `scroll` - use this for websites with infinite scrolling. Before capturing the screenshot, the API scrolls through the page for a set interval. The default interval is 10 seconds, and you can raise it to a maximum of 60 with the `scroll_interval=value` subparameter. Example: `&scroll=true&scroll_interval=20`
- `store` - accepts a boolean. Pass `&store=true` to store a copy of your screenshot directly in Crawlbase’s Cloud storage.
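To tie these parameters together, here is a hypothetical Node.js helper that turns an options object into a fully encoded query string. The parameter names and limits come from the list above, including the `desktop` and `mobile` device values and the 60-second maximum for `scroll_interval`. The camelCase option names and the validation are our own illustrative choices, not part of the Crawlbase API or its libraries.

```javascript
// Hypothetical helper: build the Screenshots API query string from an
// options object. Parameter names come from the list above; the camelCase
// option names and checks are illustrative assumptions.
function buildScreenshotQuery(token, targetUrl, options = {}) {
  const params = [["token", token], ["url", targetUrl]];

  if (options.device !== undefined) {
    if (!["desktop", "mobile"].includes(options.device)) {
      throw new Error("device must be 'desktop' or 'mobile'");
    }
    params.push(["device", options.device]);
  }
  if (options.userAgent !== undefined) {
    params.push(["user_agent", options.userAgent]);
  }
  if (options.cssClickSelector !== undefined) {
    params.push(["css_click_selector", options.cssClickSelector]);
  }
  if (options.scroll) {
    params.push(["scroll", "true"]);
    // scroll_interval is a subparameter of scroll, capped at 60 seconds above.
    if (options.scrollInterval !== undefined) {
      if (options.scrollInterval > 60) {
        throw new Error("scroll_interval cannot exceed 60 seconds");
      }
      params.push(["scroll_interval", String(options.scrollInterval)]);
    }
  }
  if (options.store) {
    params.push(["store", "true"]);
  }

  // Fully encode every value, e.g. "#some-button" becomes "%23some-button".
  return params
    .map(([key, value]) => `${key}=${encodeURIComponent(value)}`)
    .join("&");
}

// Example: the mobile version of a page, stored in Crawlbase's Cloud storage.
console.log(
  buildScreenshotQuery("PRIVATE_TOKEN", "https://example.com", { device: "mobile", store: true })
);
// prints: token=PRIVATE_TOKEN&url=https%3A%2F%2Fexample.com&device=mobile&store=true
```

To form the full request URL, append the returned string to `https://api.crawlbase.com/screenshots?`.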

Passing any of the available parameters to the API is as easy as shown below:

```javascript
const { ScreenshotsAPI } = require('crawlbase');
```

The result will be the mobile version of the website.

How Can You Use the Screenshots API for Business Growth?

The Screenshot API is a handy tool for businesses to automatically take and save screenshots of web pages.

Mastering the Screenshots API helps businesses work faster, save time, and cut down on manual work.

Now that you have gone through the step-by-step guide to Crawlbase’s Screenshots API, let’s look at three useful ways your business can use it and how it can help your company:

Cut Costs and Boost Efficiency with Customer Support

Customer support can take up a lot of time and money for businesses. It’s crucial to give customers a good experience, but it can be tough to do that quickly and accurately, especially with many products and services to manage.

One way to cut down the time and cost of customer support is to use the Screenshots API. It lets businesses easily take screenshots of web pages or services, which they can then include in their documentation and user guides.

With these screenshots on hand, businesses can show customers what they need visually without needing someone to explain it every time. This speeds up customer support and saves money.

Plus, having visual examples makes it easier for customers to understand complex processes. This reduces frustration for both customers and employees, leading to happier customers.

The Screenshots API is simple to use, so businesses don’t need to spend extra time or resources learning it. They can focus on providing great support instead.

Using the Screenshots API is a smart way for businesses to save time and money on customer support while still offering excellent service. With images of web pages ready to go, businesses can spend less time on support and more on other important tasks. Overall, mastering the Screenshots API is a valuable skill for any business looking to improve customer support.

Improve Your Marketing Conversions

One effective way to grow your business with the Screenshots API is to incorporate it into your marketing strategy. With a screen capture API, you can quickly capture screenshots of websites or web applications and turn them into captivating visuals for your marketing campaigns.

These visuals can grab the attention of potential customers and entice them to visit your website or explore your product offerings. Moreover, utilizing a web API for taking screenshots is significantly quicker than manual methods, enabling you to produce visuals at a larger scale with minimal effort.

Additionally, screen capture APIs offer customization options, allowing you to tailor the screenshots to suit your specific marketing requirements. Whether you need high-resolution screenshots of your website or quick previews of your app, the API provides the flexibility to capture accurate and visually appealing images.

Enhance Your Team’s Efficiency

Mastering the Screenshots API can greatly boost your team’s productivity. With this RESTful API, you can capture screenshots of any web page or service and get immediate access to images that aid in testing, analysis, and other tasks, saving valuable time and resources.

For instance, if your team is responsible for testing a new product or website, the Screenshots API can help them efficiently capture screenshots for evaluation. These images help assess the layout and design and confirm that everything renders as intended. The screenshots can also be used for quality assurance, verifying that the product meets the required standards before launch.
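For layout reviews, a team might capture the same page once per device. The Node.js sketch below builds one request for each documented `device` value and saves the results one after another. It assumes Node 18 or later and a `CRAWLBASE_TOKEN` environment variable. The helper names and the `layout-<device>.jpeg` file names are hypothetical.

```javascript
// Layout-check sketch (assumptions: Node 18+, CRAWLBASE_TOKEN env var,
// hypothetical helper names and file names).
const { writeFile } = require("node:fs/promises");

const BASE_URL = "https://api.crawlbase.com/screenshots";
const DEVICES = ["desktop", "mobile"]; // the two documented device options

// Describe one request per device for the same page.
function layoutCheckRequests(token, pageUrl) {
  return DEVICES.map((device) => ({
    device,
    file: `layout-${device}.jpeg`,
    requestUrl:
      `${BASE_URL}?token=${encodeURIComponent(token)}` +
      `&url=${encodeURIComponent(pageUrl)}&device=${device}`,
  }));
}

// Fetch and save each variant sequentially.
async function captureLayouts(token, pageUrl) {
  for (const { file, requestUrl } of layoutCheckRequests(token, pageUrl)) {
    const res = await fetch(requestUrl);
    if (!res.ok) {
      throw new Error(`${file}: HTTP ${res.status}`);
    }
    await writeFile(file, Buffer.from(await res.arrayBuffer()));
    console.log(`Saved ${file}`);
  }
}

// Only call the API when a token is configured.
if (process.env.CRAWLBASE_TOKEN) {
  captureLayouts(process.env.CRAWLBASE_TOKEN, "https://example.com").catch((err) =>
    console.error(err.message)
  );
}
```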

Using the Screenshots API is simple. Just make a straightforward call to the API with the URL of the web page you wish to capture, and within seconds you’ll have a screenshot ready to use. So, if you’re looking to streamline testing and analysis while saving time and money, learn how to use the Screenshots API and give it a try.

Conclusion

There is no doubt that doing the same task over and over again can be tedious. So, if it is a repetitious task, like taking tens, hundreds, or even thousands of website screenshots, then the best choice would be to automate it using an API. Not only can it save time, but it can also provide better and more consistent results.

Crawlbase’s Screenshots API is one of the best choices on the market right now. It is easy to use, flexible thanks to its features, and highly reliable. Every API call uses an IP address from a vast pool of proxies managed by artificial intelligence, so you can stay anonymous and avoid bot detection while capturing a website’s image in high resolution.