Proxy vs API to scrape websites

Which is better for website scraping: a proxy or an API?

Web scraping has grown in popularity over the last few years, and many websites and internet products hold data that interests data scientists. Many websites use anti-scraping techniques that make it harder for developers to collect the data visible on public pages. Developers typically work around blocked requests by using a set of proxies. There are many kinds of proxies, of which HTTP/S and SOCKS5 are the most commonly used. As a developer, you can purchase a set of residential or datacenter proxies and use them in your application to keep your scraper from being detected. Companies that rely on websites' public data for their products usually maintain proxy lists of their own, small or large.
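As a rough illustration of how a scraper might use a set of proxies, here is a minimal Python sketch that rotates through a list using only the standard library. The proxy addresses, credentials, and the names `PROXIES`, `next_proxy_config`, and `fetch` are placeholders invented for this example, not values from any provider.

```python
import itertools
import urllib.request

# Hypothetical proxy endpoints; replace them with the ones from your provider.
PROXIES = [
    "http://user:pass@proxy1.example.com:8080",
    "http://user:pass@proxy2.example.com:8080",
    "http://user:pass@proxy3.example.com:8080",
]

# Endless round-robin iterator over the proxy list.
_rotation = itertools.cycle(PROXIES)


def next_proxy_config():
    """Return a proxy mapping for the next proxy in the rotation."""
    proxy = next(_rotation)
    # Route both plain HTTP and HTTPS traffic through the same proxy.
    return {"http": proxy, "https": proxy}


def fetch(url, timeout=10):
    """Fetch a URL through the next proxy in the list and return the body."""
    handler = urllib.request.ProxyHandler(next_proxy_config())
    opener = urllib.request.build_opener(handler)
    with opener.open(url, timeout=timeout) as response:
        return response.read()
```

Each call to `fetch` goes out through a different proxy, which spreads requests across IP addresses. Python's standard library does not speak SOCKS5, so a SOCKS5 proxy would need a third-party package.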

Dedicated or shared proxy costs vs website APIs

A few factors drive proxy prices up or down. In general, shared proxies are cheaper than dedicated ones. Shared proxies are used by many users at the same time, and several providers give you access to shared proxy pools. Their quality is generally lower than that of dedicated proxies, but how much that matters depends on your target website. Test different proxy solutions and compare cost against quality. If shared proxies deliver enough value for your product, use them, since they are the more cost-efficient option.
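One way to put a number on the cost vs quality ratio is to compare what you pay per successful request rather than per request sent. The sketch below assumes you know the monthly cost, the request volume, and the success rate you measured against your target website. The function name and every figure in the usage example are made up for illustration.

```python
def cost_per_1000_successes(monthly_cost, requests_sent, success_rate):
    """Return the cost of 1,000 successful requests.

    success_rate is the fraction of requests that returned usable data,
    between 0 (exclusive) and 1 (inclusive).
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in the range (0, 1]")
    successful_requests = requests_sent * success_rate
    return monthly_cost / successful_requests * 1000


# Hypothetical plans measured against the same target website.
shared = cost_per_1000_successes(50, 1_000_000, 0.60)
dedicated = cost_per_1000_successes(200, 1_000_000, 0.95)
cheaper = "shared" if shared < dedicated else "dedicated"
```

With these invented figures the shared pool stays cheaper per successful request despite its lower success rate. A target website that blocks most shared IPs would flip the result.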

Another factor that affects price is whether the proxies are residential. Residential proxies are still considered higher quality. You could ask why, since residential proxies are often slower. The main reason they cost more is that real people use the same IP addresses to access the internet, so your scraper's footprint is less likely to be detected as a bot. Some websites also block datacenter IPs outright, so this is something you need to experiment with when building or scaling your web scraper.

Many websites, especially those that offer products, provide API access to their product data. Many of these APIs are public and free, and you only need to request access from the website to use them. If the data available through a website's public API is sufficient for your use case, it can save you the cost of proxies. Check whether your target website offers an API before you build a scraper, since an API gives you consistent data without extra proxy costs. Some product websites do charge for their developer APIs, and when the price is high, scraping the site through proxies can end up being the cheaper option.

Website data APIs vs data from web scraping

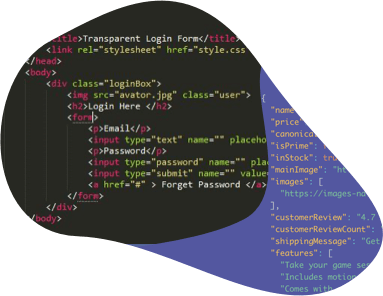

Websites normally offer data APIs that respond in JSON, CSV, or XML. That data is structured, and it is not the same as what your web scraper gets from screen scraping a public HTML page of the target website. Several factors make the data different. Here are a few:

- APIs give generic structured data that the company allows others to use freely.

- Scraping HTML pages gives you the data that you, as a user of the website, can see on the page.

- How well a scraper extracts and interprets data varies from one scraper to another.

- An API returns data reliably, whereas web scraping can fail to return data if your proxies are blocked.
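To make the difference concrete, the following sketch extracts the same product from a JSON API response and from an HTML page. Both inputs are invented samples, and the field names and CSS classes (`product-name`, `price`) are assumptions for the example. A real website will use its own markup, which can change without notice.

```python
import json
from html.parser import HTMLParser

# Invented sample inputs describing the same product.
API_RESPONSE = '{"name": "Example Widget", "price": 19.99}'
HTML_PAGE = """
<html><body>
  <h1 class="product-name">Example Widget</h1>
  <span class="price">$19.99</span>
</body></html>
"""


def product_from_api(payload):
    """Structured data: one parse call and the fields are ready to use."""
    data = json.loads(payload)
    return {"name": data["name"], "price": data["price"]}


class ProductParser(HTMLParser):
    """Collects the text inside elements with the assumed CSS classes."""

    FIELDS = {"product-name": "name", "price": "price"}

    def __init__(self):
        super().__init__()
        self._field = None  # field that the next text node belongs to
        self.product = {}

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        self._field = self.FIELDS.get(css_class)

    def handle_data(self, data):
        if self._field and data.strip():
            self.product[self._field] = data.strip()
            self._field = None


def product_from_html(page):
    """Screen scraping: locate the elements, then clean up the display text."""
    parser = ProductParser()
    parser.feed(page)
    product = parser.product
    # The page shows a formatted price such as "$19.99"; convert it to a number.
    product["price"] = float(product["price"].lstrip("$"))
    return product
```

Both functions return the same dictionary for these samples, but the HTML path depends on markup and display formatting that the API path never has to deal with.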

Why proxy location matters when scraping websites

Have you ever wondered why your proxy requests to a website that is only available in the USA are getting permission errors?

The location of your proxy matters when scraping websites. Here are some reasons why:

- A website might be available in the United States only, so requests sent from other continents will not work.

- Websites, especially those selling products, tailor product descriptions, prices, and delivery locations to the visitor's location.

- If you request a website from an IP address outside its usual target locations, you are likely to get an error page, and you will need to find out which location works best for scraping your target website.
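The points above can be sketched as a small helper that picks a proxy from the country your target website serves and flags responses that look like a location block. The proxy addresses are placeholders, and treating HTTP 403 and 451 as signs of a geo-block is a heuristic assumed for this example, since websites signal blocks in different ways.

```python
import random

# Hypothetical proxies grouped by ISO country code.
PROXIES_BY_COUNTRY = {
    "US": ["http://us1.proxy.example.com:8080", "http://us2.proxy.example.com:8080"],
    "DE": ["http://de1.proxy.example.com:8080"],
}

# Heuristic: status codes that often accompany a location-based block.
GEO_BLOCK_STATUSES = {403, 451}


def pick_proxy(country, pool=PROXIES_BY_COUNTRY):
    """Return a random proxy located in the given country."""
    proxies = pool.get(country.upper())
    if not proxies:
        raise LookupError(f"no proxies available for country {country!r}")
    return random.choice(proxies)


def looks_geo_blocked(status_code):
    """Return True if the status code suggests trying another location."""
    return status_code in GEO_BLOCK_STATUSES
```

If `looks_geo_blocked` returns True for a response, retrying the same request with `pick_proxy` and a different country code is a reasonable next step.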

Switch your website scraper to the Crawlbase API

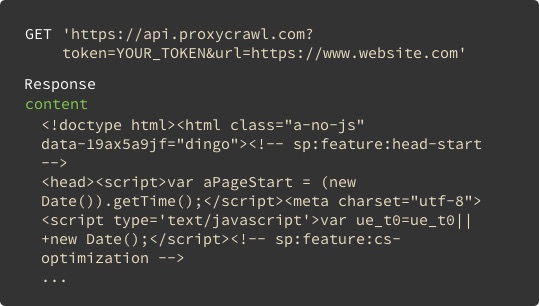

At Crawlbase we go the extra mile to access data from various websites, and all you need is a simple GET request to our API.

We provide you with an endpoint, and you make calls to it. That's it, no extra steps required.
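A call to such an endpoint could look like the sketch below. It assumes the API lives at `https://api.crawlbase.com/` and takes `token` and `url` query parameters. Treat the endpoint, the parameter names, and the timeout as assumptions and confirm them against the Crawlbase documentation before relying on them. The function names are invented for this example.

```python
import urllib.parse
import urllib.request

# Assumed endpoint; verify it in the Crawlbase documentation.
API_ENDPOINT = "https://api.crawlbase.com/"


def build_request_url(token, target_url):
    """Build the GET URL, percent-encoding the target URL as a query value."""
    query = urllib.parse.urlencode({"token": token, "url": target_url})
    return f"{API_ENDPOINT}?{query}"


def fetch_html(token, target_url, timeout=90):
    """Send the GET request and return the response body as text."""
    request_url = build_request_url(token, target_url)
    with urllib.request.urlopen(request_url, timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")
```

The target URL has to be encoded because it travels as a query parameter. `urlencode` handles that, so a target such as `https://example.com/a?b=1` keeps its own query string intact.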

Here are the main benefits of using our API compared with website APIs or with shared or private proxies:

- Crawlbase API is public and can be used for various project volumes.

- Crawlbase API offers different features, like taking screenshots, storing results, and generic website scraping.

- Crawlbase API allows you to receive raw HTML data as it appears on the original sites.

- Crawlbase API is a wrapper around any website, so you no longer need your own proxy list.

- CAPTCHA pages and banned IP addresses become a thing of the past.

- Chrome and headless browsers are available when using the Crawlbase API.

- Your product can grow faster with our engineers backing you up.

Start for free and scale when you need it

Simple pricing

For small and big projects without hidden fees (see pricing)

No long-term contracts

Pay as you go. If you don't use it, you don't pay

Completely free to start

The first 1,000 requests are completely free